A few months ago, I started some tests with RRDTool and a data source in MySQL. At that time, I just saw that performance was awful but did not investigate further. Now is time to check why, and if any improvements could be made.

Convert existing data to SQL

First, let’s see how to convert existing data to MySQL. You would need ‘rrdtool dump’ + a tool that read data and insert it back to your database; The tool I wrote for this (in PHP) will be available in a few days on github (stay tuned). I’ll talk later about how to design your database, and what are the improvements you can make.

The key here is how you’ll import the data : do not use INSERT INTO statement, this will take too much time (insert buffer is mono thread in MySQL and very inefficient when dealing with large amount of data). use LOAD DATA [LOCAL] INFILE instead.

Graphing

rrdtool has support for libdbi, that supports several databases system (MySQL, PostgreSQL, SQLite, FreeTDS – i.e MS SQL and FireBird).

You need to change your command line of course. Here is an example.

BEFORE :

rrdtool graph - DEF:user=/path/to/src.rrd:user:AVERAGE:step=600 AREA:user#66ff00:"user"

AFTER :

rrdtool graph - DEF:user=sql//mysql/host=127.0.0.1/dbname=testrrd/username=myuser/password=pwd//data/*ts/val/idds=1:avg:AVERAGE:step=600 AREA:user#66ff00:"user"

Notes :

- please report to documentation to know the exact syntax and why I used these parameters.

- ts is prefixed with * because I use datetime format in SQL.

- DS is called « avg » because this is the aggregated function to be used. I select the REAL DS with the WHERE condition (« idds=1 »).

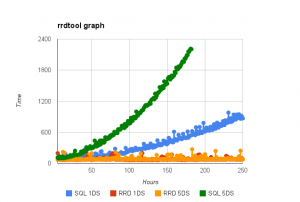

Performances

Performances are degraded, based on how many DS you used (one query to the DB will be made for each DS), and the length of the graph, exponentially. I wanted to know why such degradations. By looking at MySQL status, I can see that the query itself does not take more than 70ms, even if the graph itself needs nearly 2 seconds to be generated.

I wanted to know why such degradations. By looking at MySQL status, I can see that the query itself does not take more than 70ms, even if the graph itself needs nearly 2 seconds to be generated.

I then used oprofile to know what took so much time.

samples| %| ------------------ 14794 90.8443 libmysqlclient.so.16.0.0 441 2.7080 libc-2.12.so 277 1.7010 no-vmlinux 132 0.8106 libz.so.1.2.3 102 0.6263 libcairo.so.2.10800.8 91 0.5588 libpng12.so.0.49.0 88 0.5404 libfontconfig.so.1.4.4 79 0.4851 libdbi.so.0.0.5 58 0.3562 ld-2.12.so

libmysqlclient is the key here. But we need more information. Let’s print symbols with opreport (note : you would need not stripped libraries for this, and it can be quite a pain to find / generate).

samples % image name symbol name 14447 88.8281 libmysqlclient.so.16.0.0 mysql_data_seek 53 0.3259 libmysqlclient.so.16.0.0 _db_enter_ 33 0.2029 libmysqlclient.so.16.0.0 _db_return_

so much time for data seek … I’m sure we can do better, but it would need some specific skills. I’ll report it to the rrd-developers mailing list. Maybe someone may look into it.

UPDATE 2013-01-09

Problem is located in libdbi and implementation of dbi_result_seek_row(), that is using mysql_data_seek() everytime.

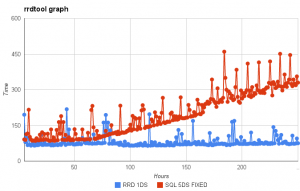

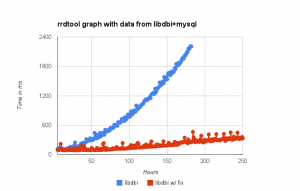

A patch was commited on CVS version of libdbi and libdbi-drivers today, and I tested it successfully. Here are performance with the patch :

or if you prefer, difference before and after the fix in libdbi :

Performances seems to be linear and are quite acceptable now. For your information, here is what take time when asking for 200 hours of data with 5DS :

| Action | Total time in ms | |

| SQL Queries | 60 | 5 queries (one for each DS) |

| Data retrieving / transformation | 100 | |

| Others | 80 |

I think I’ll patch rrdtool to add some benchmark timers to see if there is still place for optimizations (especially this data retrieving / transformation part).

I hope libdbi team will provide an official release soon. I’ll update this blog post with instructions to have latest libdbi at that time.

Hi,

very good how-to, just what I expected to.

Can you please add an how-to install or patch libdbi and libdbi-drivers, and which packages are required ?

Thanks a lot

Sorry, I don’t have much time and I wont promise something I wont do 😉 check libdbi official site (http://libdbi.sourceforge.net/) to find doc on how to compile it from source.

Version 0.9.0 has the patch for MySQL performance.

Olivier, do you have a link to the PHP code to do the MYSQL insertions?

Sorry, no code, you have to implement it yourself.